Background agents work across the entire delivery lifecycle.

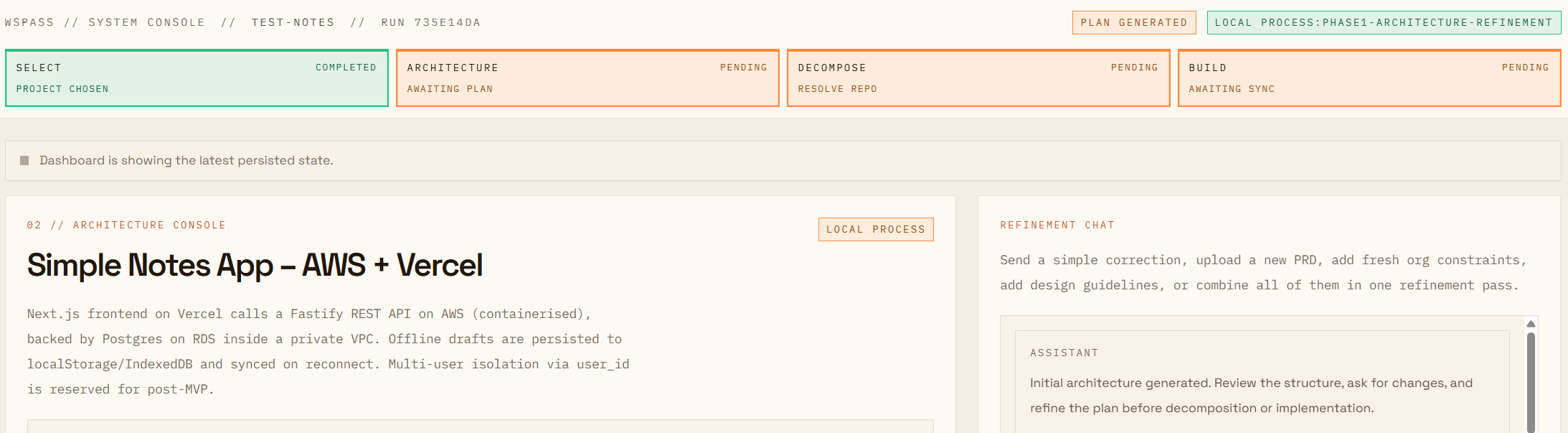

AI didn’t just speed up coding — it changed the operating pressure on the whole system. When agents can generate plans, code, and PRs at machine speed, the bottleneck shifts to what protects the business: verification, governance, and uptime. The question isn’t “can agents ship?” — it’s: how do we keep velocity without sacrificing quality, compliance, or reliability?

Work piles up at Verify — not because engineers are slow, but because verification was never designed to be parallelized. PASS reimagines DevOps for agents: shift-left, enforced guardrails, and traceability.

Individual speed ≠ organizational velocity.

You rolled out AI copilots for developers. They code faster. PRs flood in.

Yet cycle time doesn’t improve. Review queues grow. Incidents creep up. Tech debt compounds.

The gains accrue to the individual, not the organization. If you accelerate code creation without redesigning verification and governance, you entrench the bottleneck. This is the false summit.

The false summit

Establish background agent primitives

Find your system bottlenecks

Scale your compliance factory

The answer: agents that review before any human has to.


A copilot needs a developer’s attention. A background agent doesn’t. It runs in a cloud execution environment with tools: repo access, test runners, linters, security scanners, deployment hooks, and observability. It completes work asynchronously and produces structured outputs you can review.

Trigger it from Slack, a PRD submission, a webhook, or a scheduled job, then walk away. Come back to artifacts, PRs, and a decision point. Delegate now, decide later.

| | Copilot developer | PASS (Background Agent) |
|---|---|---|
| Where it runs | One person, one screen | Cloud, triggered by any event, parallelized |
| How triggered | Manually assigned | Intake events, schedules, Slack, API call: any signal |
| Scope | Single submission | Across entire queue, concurrently |
| Developer role | In the loop: typing, patching, context-switching | On the loop: review outputs, approve high-impact actions |

Unattended agents need more than a prompt. They need standards that make autonomy safe: sandboxed execution, integration context, requirements checks, cost caps, and an audit trail. These primitives are what separate a demo from production.
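As a rough illustration of two of these primitives, a cost cap and an audit trail can live in the same small object. This is a minimal sketch under stated assumptions, not how PASS implements it: `RunBudget`, `BudgetExceeded`, the token unit, and the log fields are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


class BudgetExceeded(RuntimeError):
    """Raised when a step would push a run past its token cap."""


@dataclass
class RunBudget:
    # max_tokens is a hypothetical per-run cap; use whatever unit your billing reports.
    max_tokens: int
    spent: int = 0
    audit_log: list = field(default_factory=list)

    def charge(self, step: str, tokens: int) -> None:
        """Record the step, and refuse it if it would exceed the cap."""
        allowed = self.spent + tokens <= self.max_tokens
        # Denied steps are logged too, so the trail explains why a run stopped.
        self.audit_log.append({"step": step, "tokens": tokens, "allowed": allowed})
        if not allowed:
            raise BudgetExceeded(f"{step} would exceed the cap of {self.max_tokens} tokens")
        self.spent += tokens
```

Because the check happens in code before the spend is recorded, the cap holds regardless of what the agent was prompted to do.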

Agent has full development environment

The agent runs in a sandbox with repo access and the same toolchain a human relies on: typecheck, lint, tests, security scanning, and CI parity. This is how you let an agent work without turning your codebase into a scratchpad.

Enforced at runtime, not by prompt

Agents are actors in your system. They need the same controls as human contributors — identity, permissions, audit trails. Governance enforced by a system prompt ("please don't approve edge cases") is a suggestion. Governance enforced at the execution layer — scoped credentials, deterministic command blocking — is actual governance.
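One way to picture deterministic command blocking is an allowlist checked in code before anything executes. The sketch below is an assumption-laden illustration, not the PASS mechanism: `ALLOWED_PREFIXES` and `authorize` are hypothetical names, and the specific commands are examples only.

```python
import shlex

# Hypothetical policy: a command may run only if it starts with one of these prefixes.
ALLOWED_PREFIXES = [
    ("git", "status"),
    ("git", "diff"),
    ("pytest",),
    ("ruff", "check"),
]


def authorize(command: str) -> list[str]:
    """Return the parsed argv if the command is allowed, else raise PermissionError."""
    try:
        argv = shlex.split(command)
    except ValueError as exc:
        # Unbalanced quotes: refuse rather than guess what was meant.
        raise PermissionError(f"unparseable command: {command!r}") from exc
    for prefix in ALLOWED_PREFIXES:
        if tuple(argv[: len(prefix)]) == prefix:
            return argv
    raise PermissionError(f"blocked by policy: {command!r}")
```

The returned `argv` is meant to be executed as a list without a shell (for example `subprocess.run(argv)`), so characters such as `;` or `|` are passed as plain arguments rather than interpreted. An allowlist is used here instead of a denylist because anything not explicitly permitted is refused by default.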

The problem isn’t agent velocity — it’s missing guardrails. When code changes arrive at machine speed, the system breaks where quality is enforced: verification, secrets handling, architectural boundaries, and observability. Agentic DevOps exists to protect uptime while keeping velocity.

Verification queues that stall shipping

PRs pile up in CI and review. PASS shifts verification left: agents run lint/typecheck/tests/security checks before a human spends attention. Humans focus on judgment, not on re-running the same pipeline failures.
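A pre-verification step of this kind might look like the following sketch. It assumes each check is an ordinary command whose exit code signals pass or fail; `CheckResult`, `run_checks`, and `ready_for_human_review` are hypothetical names, and the example commands are placeholders that stand in for your real lint, typecheck, test, and security tools.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    output: str


def run_checks(checks: dict[str, list[str]]) -> list[CheckResult]:
    """Run every check to completion so a reviewer sees all failures at once."""
    results = []
    for name, argv in checks.items():
        try:
            proc = subprocess.run(argv, capture_output=True, text=True)
        except FileNotFoundError:
            # A missing tool is reported as a failed check, not a crash.
            results.append(CheckResult(name, False, f"executable not found: {argv[0]}"))
            continue
        results.append(CheckResult(name, proc.returncode == 0, proc.stdout + proc.stderr))
    return results


def ready_for_human_review(results: list[CheckResult]) -> bool:
    """Only changes that pass every automated check reach a human."""
    return all(result.passed for result in results)


# Placeholder checks that use the current interpreter so the sketch runs anywhere.
EXAMPLE_CHECKS = {
    "passes": [sys.executable, "-c", "print('ok')"],
    "fails": [sys.executable, "-c", "raise SystemExit(1)"],
}
```

Running all checks rather than stopping at the first failure is a deliberate choice here: the agent can attempt to fix everything in one retry loop before a human is asked to look.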

Credential and integration drift

Unattended systems fail when they lack the tools they need. PASS makes requirements explicit: missing tokens, missing permissions, or missing integration context becomes a visible artifact. No silent failures. No “it worked on my machine.”
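To make "missing requirements become a visible artifact" concrete, here is a small preflight sketch. It assumes credentials arrive as environment variables, which may not match your setup; `Requirement`, `preflight`, and the variable names in the usage note are hypothetical.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass
class Requirement:
    name: str     # human-readable label shown in the report
    env_var: str  # hypothetical environment variable that carries the credential


def preflight(requirements: list[Requirement],
              env: Optional[Mapping[str, str]] = None) -> dict:
    """Return a report listing every requirement that is unset or empty."""
    env = os.environ if env is None else env
    missing = [req.name for req in requirements if not env.get(req.env_var)]
    return {"ready": not missing, "missing": missing}
```

For example, `preflight([Requirement("GitHub token", "GITHUB_TOKEN")])` returns a report the agent can persist or post before it starts work, instead of failing midway through a run.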

Agentic DevOps turns delivery into a factory. Instead of humans standing at every station, agents do the repetitive execution work and hand humans a decision point. We use PRD → plan → issues as the concrete demo — but the reimagined workflow is the control layer that keeps velocity safe.

- Every PRD becomes a persisted architecture pack (reviewable artifact)

- Work is decomposed into issue-sized tasks with dependencies

- Agents pre-verify changes (lint/typecheck/tests/security) before human review time

- Audit trail logs what the agent did, what it touched, and why actions were allowed

- Cost caps + observability prevent runaway execution and make failures diagnosable
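The "issue-sized tasks with dependencies" item can be sketched with a dependency graph that yields batches of tasks safe to run concurrently. This is an illustrative sketch using Python's standard `graphlib` module, not the PASS decomposition logic; `parallel_batches` and the task names in the usage note are hypothetical.

```python
from graphlib import TopologicalSorter


def parallel_batches(tasks: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches; each batch depends only on earlier batches.

    `tasks` maps a task name to the set of tasks it depends on.
    Raises graphlib.CycleError if the dependencies are circular.
    """
    sorter = TopologicalSorter(tasks)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only to make output stable
        batches.append(ready)
        sorter.done(*ready)
    return batches
```

Given `{"schema": set(), "api": {"schema"}, "docs": {"schema"}, "ui": {"api"}}`, the batches come out as `[["schema"], ["api", "docs"], ["ui"]]`, so `api` and `docs` could be handed to agents in parallel once `schema` is done.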

Your engineers aren’t in the queue. They’re on the queue.

The factory runs unattended: plan → verify → propose. Humans observe, set thresholds, and approve the actions that carry accountability. The goal is simple: protect uptime without reducing organizational velocity.

What we measure: lead time (PRD→issues/PR), verification pass rate, retry loops, token spend per run, and time-to-diagnosis when something fails.
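As a sketch of how some of these measures could be rolled up from per-run records, assuming each run logs the fields shown: the `Run` fields and the `summarize` function are hypothetical, and time-to-diagnosis is left out because it depends on how failures are tracked.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    lead_time_hours: float     # PRD submitted -> issues/PR staged
    verification_passed: bool  # did the final verification pass?
    retries: int               # retry loops before the run finished
    tokens: int                # token spend for the run


def summarize(runs: list[Run]) -> dict:
    """Aggregate per-run records into the headline numbers."""
    if not runs:
        raise ValueError("no runs to summarize")
    return {
        "mean_lead_time_hours": mean(r.lead_time_hours for r in runs),
        "verification_pass_rate": sum(r.verification_passed for r in runs) / len(runs),
        "mean_retries": mean(r.retries for r in runs),
        "mean_tokens_per_run": mean(r.tokens for r in runs),
    }
```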

One decision must remain human, and why.

PASS takes on real cognitive and operational responsibility: turning intent into a plan, generating structured artifacts, running verification, and staging work as issues or PRs. But the architecture is explicit about where AI authority terminates.

Approve syncing the backlog to the repo

PASS can generate an architecture pack and decompose it into issue-sized work.

But before it writes anything into the system of record (GitHub issues), a human must approve the backlog.

This decision stays human because the cost of being wrong is real: noisy or mis-scoped tickets waste engineering cycles, create coordination debt, and can accidentally capture sensitive requirements in the wrong place.

The agent can propose. The human owns the accountability.

Stop-line: the system may draft and stage work, but issue sync requires explicit approval.
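The stop-line can be expressed as a gate in code rather than an instruction in a prompt. The sketch below is one possible shape under stated assumptions: `Backlog`, `ApprovalRequired`, and `sync_issues` are hypothetical names, and `create_issue` is a caller-supplied function standing in for whatever actually writes to the issue tracker.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class ApprovalRequired(PermissionError):
    """Raised when a sync is attempted on a backlog no human has approved."""


@dataclass
class Backlog:
    issues: list[str]                  # drafted issue titles, staged but not synced
    approved_by: Optional[str] = None  # set only by an explicit human action

    def approve(self, reviewer: str) -> None:
        if not reviewer:
            raise ValueError("approval must name a reviewer")
        self.approved_by = reviewer


def sync_issues(backlog: Backlog, create_issue: Callable[[str], object]) -> list:
    """Write issues to the system of record only after explicit approval."""
    if backlog.approved_by is None:
        raise ApprovalRequired("issue sync requires explicit human approval")
    return [create_issue(title) for title in backlog.issues]
```

Recording the reviewer's name, rather than a bare boolean, keeps the approval itself part of the audit trail.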

At scale, what breaks first isn’t generation — it’s governance: bad decompositions flooding repos, missing context, and no visibility into why the agent produced a task. PASS treats approval + traceability as first-class so velocity doesn’t turn into backlog chaos.